OECD (The Organisation for Economic Co-operation and Development) regularly publishes country data for various financial institutions to understand the financial growth of a nation. This data is provided through an API like below and returns a huge JSON (sometimes heavily nested but evenly distributed)

Response of the above api for duration of 2 year is below :

{"header":{"id":"a0bc66c7-5c2f-4ab9-aa99-e0dacdc89552","test":false,"prepared":"2020-05-26T10:50:06.8781106Z","sender":{"id":"OECD","name":"Organisation for Economic Co-operation and Development"},"links":[{"href":"https://stats.oecd.org:443/sdmx-json/data/QNA/AUS+AUT.GDP+B1_GE.CUR+VOBARSA.Q/all?startTime=2009-Q2&endTime=2011-Q4&detail=serieskeysonly","rel":"request"}]},"dataSets":[{"action":"Information","series":{"0:0:0:0":{"observations":{"0":[null],"1":[null],"2":[null],"3":[null],"4":[null],"5":[null],"6":[null],"7":[null],"8":[null],"9":[null],"10":[null]}},"1:0:0:0":{"observations":{"0":[null],"1":[null],"2":[null],"3":[null],"4":[null],"5":[null],"6":[null],"7":[null],"8":[null],"9":[null],"10":[null]}}}}],"structure":{"links":[{"href":"https://stats.oecd.org/sdmx-json/dataflow/QNA/all","rel":"dataflow"}],"name":"Quarterly National Accounts","description":"Quarterly National Accounts","dimensions":{"series":[{"keyPosition":0,"id":"LOCATION","name":"Country","values":[{"id":"AUS","name":"Australia"},{"id":"AUT","name":"Austria"}],"role":"REF_AREA"},{"keyPosition":1,"id":"SUBJECT","name":"Subject","values":[{"id":"B1_GE","name":"Gross domestic product - expenditure approach"}]},{"keyPosition":2,"id":"MEASURE","name":"Measure","values":[{"id":"VOBARSA","name":"National currency, volume estimates, OECD reference year, annual levels, seasonally adjusted"}]},{"keyPosition":3,"id":"FREQUENCY","name":"Frequency","values":[{"id":"Q","name":"Quarterly"}],"role":"FREQ"}],"observation":[{"id":"TIME_PERIOD","name":"Period","values":[{"id":"2009-Q2","name":"Q2-2009"},{"id":"2009-Q3","name":"Q3-2009"},{"id":"2009-Q4","name":"Q4-2009"},{"id":"2010-Q1","name":"Q1-2010"},{"id":"2010-Q2","name":"Q2-2010"},{"id":"2010-Q3","name":"Q3-2010"},{"id":"2010-Q4","name":"Q4-2010"},{"id":"2011-Q1","name":"Q1-2011"},{"id":"2011-Q2","name":"Q2-2011"},{"id":"2011-Q3","name":"Q3-2011"},{"id":"2011-Q4","name":"Q4-2011"}],"role":"TIME_PERIOD"}]},"annotations":[{"title":"Copyright OECD - All rights reserved","uri":"","text":""},{"title":"Terms and Conditions","uri":"http://www.oecd.org/termsandconditions/","text":""},{"title":"Privacy Policy","uri":"http://www.oecd.org/privacy/","text":""},{"title":"MyOECD","uri":"https://www.oecd.org/login","text":""},{"title":"Contact Us","uri":"http://www.oecd.org/contact/","text":""}]}}

Above response result is a small sample, but these API’s sometimes return huge JSON data as well, So the Problem Statement is :

Assume large JSON responses are being returned, How will you find the max depth of this response JSON in best possible way with using optimal resources? Assume your application may not have enough memory to load these complete JSON into memory due to memory constraint.

I will discuss three approaches to solve this in JAVA.

Simple Approach : Use JSONObject Class from org.json.jar , it has Constructor to parse the String which converts into JSON Object, now simply traverse this JSONObject to find depth. But this solution will fail as it does not cater to memory constraints.

Traditional Approach: Traverse the Json character by character and increment depth count by one whenever you encounter opening brace.

Since these JSON may not fit into memory , even if we assume that some responses do fit into memory, but still it will be dangerous to flatten deeply nested JSON objects recursively, as their are limits to the depth of stack as they could result in stack overflow errors.

Also from performance standpoint, recursion is usually slower than an iterative solution when the stack frames are large in number.

Even the JSON would have been small, Pseudo Algorithm would have been something like this:

1) Maintain a total_depth counter variable , nested_depth counter variable

2) Traverse the JSON, follow the below conditions

a) If current character is '{' increment the nested depth count and total_depth count if a new depth is encountered.

b) If character is ‘}’. Check if nested_depth is > 0 as that means we previously had a ‘}’ character so decrement the nested_depth counter.

c) Repeat steps a & b until you reach the end. once you reach the end return the total_depth counter.

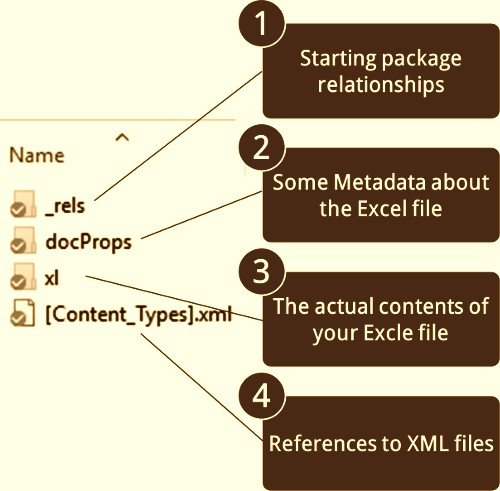

Optimal Approach : Let me give you a Hint ! (See the below Diagram)

|

| Figure :Parallel Processing |

Use the above approach but in a multi-threaded environment clubbing the Producer-Consumer Approach.

I have written a set of utilities for Parallel Processing below is the link for it :

The above abstract class is extended with a maxDepth variable of type AtomicInteger as multiple threads are setting its value . Also a level object is needed which holds the depthValue and one unit of JSON (i.e everything between ‘{’ to ‘}’ including nested JSON’s) as it member variables.

This level object is then pushed in to a Blocking Queue (of defined size say 100 to handle memory constraint). Now One task thread is dedicatedly used to create the above level objects with single unit of JSON & depthValue and push it into the Blocking Queue till it gets full or it reaches the end of the JSON Response(It can then join the remaining task threads for processing).

And the remaining task thread assume dual role of consuming/processing (setting up the depth etc) as well pushing it back into the queue.

If the JSON encountered is a nested one then they simply create a new level object with depthValue incremented by 1 and the nested json(Here we also increment the maxDepth variable as well).

The above task executors poll continuously and process the contained JSON, and if they find the nested JSON embedded in it, they put it back into the Blocking Queue with the depth value incremented and the nested json.

So at a time say 10 threads do the depth finding task in parallel. Thus parallel processor framework can put a cap on memory and utilizes the CPU cores effectively.

There might be multiple approaches to solve this problem, this was asked in a BlueOptima Software Engineer interview.